Media Law for the Real World: Copyright, AI, and the First Amendment

An analysis of the Disney and OpenAI deal, and who owns creativity in the age of AI

In December, The Walt Disney Company announced a $1 billion investment in OpenAI, along with a three-year licensing agreement that allows OpenAI’s tools to generate content featuring characters from Disney, Marvel, Star Wars, and Pixar in a limited capacity. Under the deal, users of tools like ChatGPT and Sora will be able to create certain forms of content using Disney’s intellectual property, and Disney employees will use OpenAI’s technology internally to develop new products.

Around the same time the deal was announced, Disney sent a cease-and-desist letter to Google, accusing the company of using Disney’s copyrighted material to train its AI models. That contrast alone reveals how unsettled the law is in this space: licensing AI on the one hand, while aggressively enforcing copyright on the other. (LinkedIn News Story)

This deal doesn’t just raise questions about technology. It exposes a deeper legal tension between copyright law and the First Amendment, a tension that artificial intelligence makes impossible to ignore.

Copyright constrains everyone; the First Amendment constrains the government

At first glance, it’s tempting to ask whether AI-generated content involving copyrighted characters is protected by the First Amendment. But that framing skips an essential step.

The First Amendment only restricts government action. Disney is not a state actor, and neither is OpenAI. When private companies decide what content to allow, license, or restrict, they are not violating the First Amendment in the constitutional sense.

Copyright, by contrast, applies to everyone. It governs what private individuals can copy, distribute, and transform. Most copyright disputes never become First Amendment cases at all.

But here’s where things get complicated: copyright law itself is created by the government. When Congress writes copyright rules, it is regulating speech, even if it does so indirectly. That means copyright law must be structured in a way that does not suppress lawful expression more than necessary.

That’s where the First Amendment enters the picture.

Where copyright borrows First Amendment values

Courts have long recognized that copyright and free speech exist in a careful balance. Copyright limits speech in the short term to encourage more speech in the long term. That balance only works if copyright law leaves room for commentary, criticism, and parody.

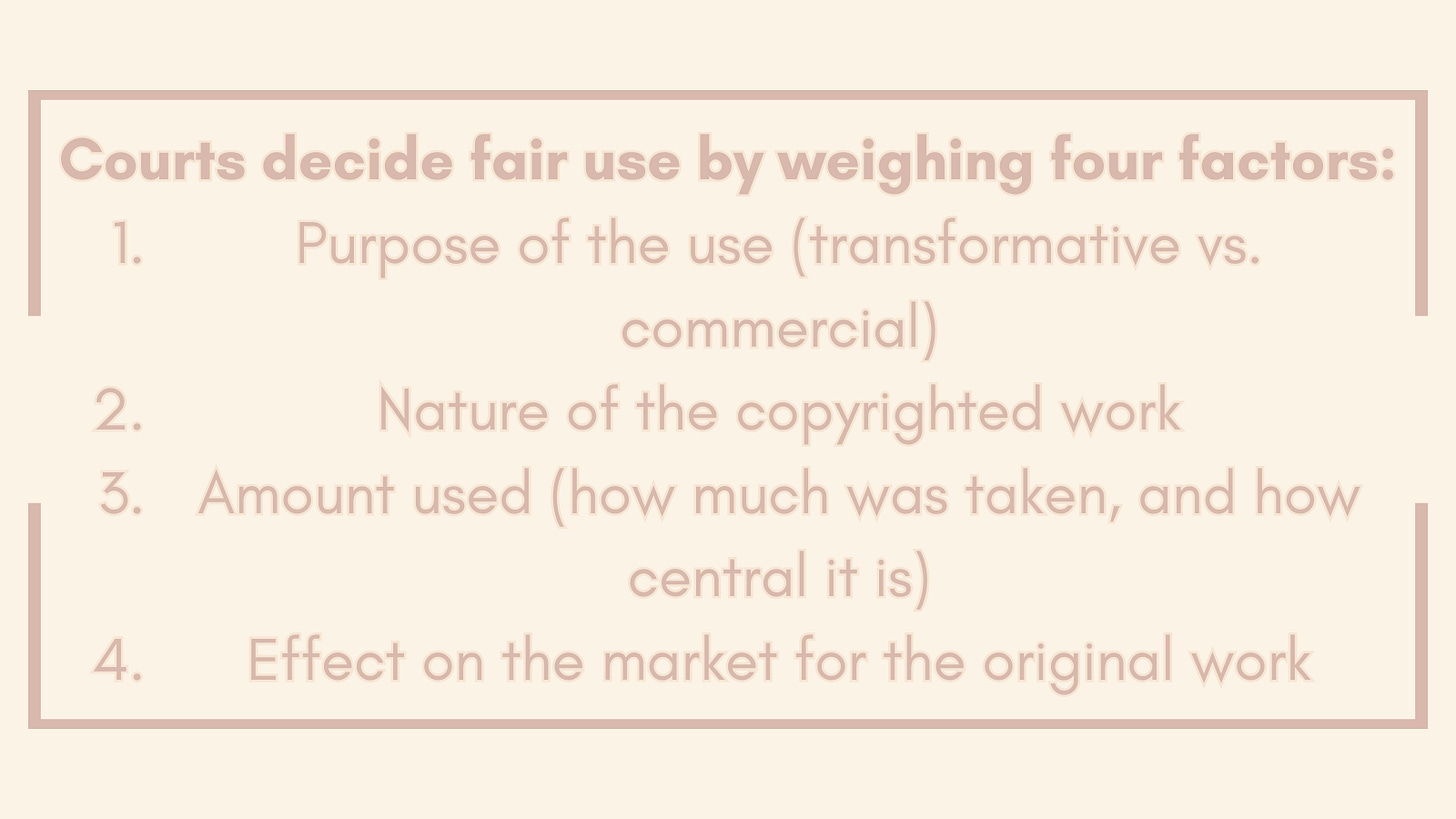

This is why doctrines like fair use exist. Fair use allows certain expressive uses of copyrighted works (even without permission) when those uses are transformative, meaning they add new meaning, message, or purpose.

In parody cases, courts have explicitly recognized that copying may be necessary to make a point. Sometimes, creators have to tolerate uses they dislike because expressive freedom matters more. (Campbell v. Acuff-Rose Music, Inc.)

Importantly, courts have handled this tension within copyright law itself, rather than treating it as a separate First Amendment battle. First Amendment values are filtered through fair use, not used to override copyright entirely.

Why AI breaks the framework

All of this doctrine assumes something critical: a human speaker.

Fair use analysis depends on questions like:

Who is expressing something?

What is their purpose?

Are they commenting on the original work?

Are they transforming it meaningfully?

AI complicates every one of those questions.

When a user prompts an AI system to generate content featuring a copyrighted character, who is the “speaker”? Is it the user? The AI company? The model trained on vast amounts of data? Who has the intent to parody? Who is responsible for the meaning of the output?

The existing framework was not built for machine-generated expression at scale. AI doesn’t just copy; it reproduces style, tone, and recognizable expression instantly and endlessly. That destabilizes the balance copyright law relies on.

Why the Disney/OpenAI deal makes sense

From Disney’s perspective, the deal is pragmatic. In the age of generative AI, people are already creating images, videos, and stories involving copyrighted characters, often without permission and without compensation to the rights holder. The question is no longer whether this content will exist, but under what conditions.

Licensing allows Disney to retain some control over how its characters are used, receive compensation for that use, and limit the most extreme or damaging forms of misuse. It’s a way to manage a reality that copyright law alone can no longer fully prevent.

For OpenAI, licensing reduces legal uncertainty. Copyright law was not designed with generative AI in mind, and courts are still grappling with whether training AI models on copyrighted material qualifies as fair use. (Thomson Reuters v. ROSS, Kadrey v. Meta) A licensing agreement offers a clearer legal foundation than relying entirely on unsettled doctrine.

In that sense, the deal reflects a lesser-of-two-evils approach: regulated access with compensation versus unregulated use through loopholes that offer creators no protection at all.

What this means for human creators

The more uncomfortable questions emerge when we consider what this means for people whose livelihoods depend on creative work.

If AI systems can generate content using licensed characters, voices, or styles, studios could theoretically reduce reliance on human actors, writers, and artists. Production costs could drop, timelines could shrink, and entire roles in the creative process could be replaced by synthesis.

Expression, parody, and association

AI-generated content involving copyrighted characters can still be considered a form of expression. Offensive or explicit expression does not lose its expressive character simply because it is uncomfortable.

But expression does not mean immunity. As mentioned earlier, for a use to qualify as parody, it must comment on or critique the original work. Many AI-generated uses do not do that. They rely on recognizable characters for attention, not commentary.

That distinction helps explain creators’ discomfort. When a recognizable character appears in obscene or profane content, it can feel like an unwanted association, as if the work were being used to voice values the creator never agreed to express.

With AI, control is eroding and intent is blurring, which makes enforcement harder.

Why lawmakers are stuck

The moment the government steps in to regulate AI-generated content, the First Amendment becomes unavoidable.

If lawmakers prohibit broad categories of AI-generated expression, they risk suppressing lawful parody and commentary. If they grant copyright holders absolute control over all AI uses, they risk chilling expressive speech that copyright law has traditionally allowed.

Copyright assumes scarcity, but AI creates abundance. The law has to reconcile those realities.

The real question AI forces us to confront

At its core, this debate isn’t really about Disney or OpenAI. It’s about ownership in a world where creativity can be automated and reproduced endlessly.

Copyright law has always balanced private ownership and public expression. Artificial intelligence destabilizes that balance by removing the human author from the center of the analysis.

That’s why this issue feels so difficult. The law isn’t necessarily behind, but it is standing at a breaking point.